Module 6: Online and AI-facilitated GBV

Exploring how gender-based violence manifests in digital spaces and how AI can both amplify and help prevent harm.

Chapter Overview

Welcome to this module on Online and AI-facilitated GBV. This module explores the ways gender-based violence (GBV) occurs in online spaces and examines the dual role of Artificial Intelligence (AI) in both enabling and combating these harms.

Learning Objectives

By the end of this module, you will be able to:

- Identify the main forms of online and AI-facilitated GBV.

- Understand the online–offline continuum and how abuse can escalate.

- Analyse the dual role of AI in perpetuating and mitigating GBV.

- Apply strategies for digital safety and support in youth work.

💻 Forms of Online Gender-Based Violence

In today’s digital world, the internet is central to how young people connect, communicate, and explore who they are. But alongside these opportunities, digital spaces also bring serious risks—especially for girls, young women, LGBTIQA+ youth, and other marginalised groups. Technology is not neutral. It can be used to support, but also to control, shame, or harm.

Online gender-based violence (GBV) refers to harmful actions carried out through digital technologies that target people based on their gender, gender identity, or sexual orientation. According to the European Institute for Gender Equality (EIGE), over half of young women (52%) across the EU have experienced some form of online violence—including threats, harassment, and the non-consensual sharing of intimate images (EIGE, 2022). LGBTIQA+ youth, racial minorities, and youth with disabilities are also disproportionately targeted.

Some forms of online GBV are loud and public, like hateful comments or manipulated images. Others are hidden or disguised as “normal,” such as controlling messages in a relationship or a stranger building trust online for abusive reasons. But all forms of online GBV can have deep emotional, psychological, and even physical impacts. They can escalate offline—and they often do.

This section introduces seven key forms of online GBV that youth workers and educators should be able to recognise. Each one is explained with simple definitions and concrete examples drawn from platforms young people actually use—like WhatsApp, TikTok, Discord, or Instagram. Knowing the signs is the first step to preventing harm and offering support.

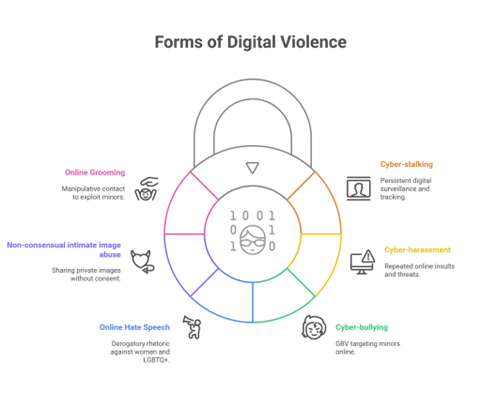

1. Cyber-stalking

Using digital tools to monitor someone’s activity or location without consent. This may include tracking someone through GPS apps like Snap Map, checking their online status obsessively, or using shared passwords to access their messages.

Example: A teen’s ex continues to track her location on Snap Map even after she ends the relationship and blocks him on Instagram.

2. Cyber-harassment

Repeated, unwanted messages that are aggressive, threatening, sexual, or humiliating. This often happens in DMs, group chats, or through anonymous accounts, and can escalate quickly.

Example: A student receives daily voice notes from a classmate calling them names and threatening to expose personal secrets.

3. Cyber-bullying

A pattern of targeted online cruelty toward a minor, usually from peers. It can include memes, impersonation, public shaming, or exclusion from digital spaces (e.g., WhatsApp groups, private stories).

Example: A group spreads a photoshopped meme about a classmate’s body, tagging her in TikTok comments.

4. Online gender-based hate speech

Abusive or threatening language directed at someone based on their gender, sexual orientation, or identity. Often normalised through irony, memes, or jokes, but deeply harmful.

Example: A nonbinary youth is repeatedly misgendered in a Discord server and told to “go off yourself” after sharing their pronouns.

5. Non-consensual intimate image abuse (sometimes called “revenge porn”)

Sharing or threatening to share intimate images or videos without the subject’s consent. This includes real photos as well as AI-manipulated content like deepfakes.

Example: Someone leaks a nude photo sent in confidence, or creates a deepfake video and circulates it on anonymous forums.

6. Online grooming

When someone intentionally builds trust with a minor over time through online contact, usually to manipulate or exploit them sexually. Groomers often pretend to be peers or offer validation, gifts, or praise.

Example: A 14-year-old girl playing online games is invited to private chats by someone who flatters her and later asks for photos and secrets.

Note: Grooming often doesn’t start with threats—it begins with friendliness. Young people may not realise they’re being manipulated.

7. Doxxing

The public release of someone’s personal information (name, address, phone number, school) to intimidate them or incite others to target them. Doxxing often follows online disagreements, activism, or coming-out stories.

Example: After a youth posts about LGBTIQA+ rights on TikTok, a troll account leaks their home address in the comments.

Each form of online GBV may appear in different ways, but all can impact a young person’s mental health, confidence, safety, and freedom. Youth workers, educators, and peer allies play a vital role in recognising red flags, creating safer digital environments, and helping young people know what’s not OK. Table 1 summarises six of these forms of online GBV.

| Form | Definition | Illustrative Evidence | Youth Work Practice | Example Scenario |
|---|---|---|---|---|
| Cyber-stalking & digital surveillance | Persistent, unwanted monitoring using apps, GPS, or logins to track someone’s movement or activity online. | Rogers et al. (2022) identify common tactics including spyware, shared cloud passwords, and “Find My Phone” exploitation. | Ask: Are young people aware of hidden apps or shared credentials? Promote tech check-ups and digital boundaries. | A teen reports her ex still knows her whereabouts after break-up, despite blocking him. |
| Cyber-harassment | Repeated sending of threats, insults, or degrading content via digital platforms. | Henry & Powell (2018) show that online abuse often mimics traditional IPV patterns, especially in romantic breakups. | Support youth in saving evidence, reporting on platforms, and managing emotional fallout. | A student receives abusive voice notes daily from a classmate in a group chat. |
| Cyber-bullying | Harassment or humiliation of minors online, often in school or peer contexts. | Stonard et al. (2014) highlight how tech extends bullying from school hours into private spaces. | Encourage group norms in peer spaces; use roleplay to build empathy and intervention skills. | A group of girls make memes about another student’s body and share them on Snapchat. |
| Online gender-based hate speech | Aggressive, demeaning rhetoric targeting someone due to their gender or identity. | Powell et al. (2022) describe how hate speech targets women and LGBTIQA+ people through layered attacks (e.g., combining sexism and homophobia). | Prepare youth to recognise and respond to hate, not just ignore or “scroll past.” | A nonbinary teen is repeatedly misgendered and called slurs on a gaming Discord server. |
| Non-consensual intimate image abuse (“revenge porn”) | Sharing private images or videos without consent, often by ex-partners or peers. | Lippman & Campbell (2014) show that teens feel pressured to share nudes, and these images often get reshared without consent. | Teach youth about legal rights, consent, and emotional consequences of image sharing. | A boy threatens to post his ex’s photo unless she agrees to talk to him again. |
| Online grooming | Manipulating a minor into trust or dependency for sexual purposes, online or offline. | Wolak et al. (2008) found most grooming starts with flattery and builds gradually into manipulation. | Emphasise how abusers build trust, not just use threats; build youth media literacy. | A 15-year-old is invited into a private chat by a stranger promising gaming rewards. |

🤖 The Role of Artificial Intelligence in Gender-Based Violence

Artificial Intelligence (AI) is changing how we communicate, interact, and access information—especially in digital spaces where young people spend much of their time. But alongside its benefits, AI is also reshaping how harm can occur, including gender-based violence (GBV).

This unit explores how AI technologies can be used both to facilitate abuse and to support prevention and response efforts. It offers practical insights for youth workers and educators seeking to understand the tools, risks, and opportunities AI brings to their practice.

The discussion also highlights the ethical challenges of relying on automated systems in sensitive contexts, and reflects on the limitations of current datasets and design approaches, especially those that lack input from young people or marginalised communities.

Importantly, AI-facilitated violence should not be seen as something entirely new. It represents a continuation of gender-based violence through new digital channels, rather than a distinct or isolated form. As part of the online–offline continuum of GBV, many of the harms we now see, such as surveillance, coercion, and image-based abuse, mirror offline patterns of power and control. Technology has not created these behaviours, but it has amplified, automated, and scaled them, often in ways that are harder to detect or respond to.

Artificial Intelligence can be a powerful tool—but like many technologies, it can also be misused. In the context of GBV, AI has enabled new methods for perpetrators to harass, shame, or control others, particularly in digital spaces where young people are most active.

One of the most disturbing developments is the use of deepfakes—AI-generated images or videos that place someone’s face into a pornographic scene without their consent. These fakes are often shared on social media or encrypted messaging apps. Even when they are clearly fabricated, victims—often girls, young women, and LGBTIQA+ youth—report feeling humiliated, fearful, and socially isolated (Umbach et al., 2024).

Another frequent misuse of AI is the creation of harassment bots: automated accounts programmed to send violent, demeaning, or threatening messages at scale. These bots often target youth activists, influencers, or anyone speaking publicly about gender, sexuality, or justice issues. The sheer volume of messages can make the abuse feel relentless and impossible to escape (Powell et al., 2022).

AI can also play a role in harm without direct malicious intent. Platform algorithms that prioritise content with high engagement tend to boost posts that provoke anger or outrage. This means misogynistic or transphobic content often reaches more people—not because platforms want to promote it, but because their AI systems are designed to optimise attention, not safety (Henry & Powell, 2018).
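The mechanism can be illustrated with a minimal sketch of engagement-based ranking. Everything here is hypothetical: the `Post` fields, the weights, and the `harm_score` value (imagined as the output of a separate safety model) are assumptions made for illustration, and real platform ranking systems are far more complex and not public.

```python
from dataclasses import dataclass


@dataclass
class Post:
    text: str
    likes: int
    shares: int
    angry_replies: int
    harm_score: float  # hypothetical 0..1 estimate from a separate safety model


def engagement_score(post: Post) -> float:
    # Every interaction counts as engagement, including angry replies.
    # The weights (1, 2, 3) are illustrative assumptions.
    return post.likes + 2 * post.shares + 3 * post.angry_replies


def safety_aware_score(post: Post) -> float:
    # Same engagement signal, scaled down as the estimated harm goes up.
    return engagement_score(post) * (1.0 - post.harm_score)


posts = [
    Post("Supportive post about Pride", likes=120, shares=10, angry_replies=2, harm_score=0.0),
    Post("Post mocking a trans creator", likes=40, shares=30, angry_replies=60, harm_score=0.9),
]

by_engagement = sorted(posts, key=engagement_score, reverse=True)
by_safety = sorted(posts, key=safety_aware_score, reverse=True)

print(by_engagement[0].text)  # Post mocking a trans creator
print(by_safety[0].text)      # Supportive post about Pride
```

In this toy example the hostile post wins on engagement alone because outrage generates replies and shares. Only when safety is part of the score does the supportive post rank first.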

Lastly, AI-driven technologies used in smart homes, wearable devices, or mental health apps may be turned into tools of surveillance. An abuser could use these systems to monitor someone’s location, access private messages, or interpret emotional states without consent. Victims may not even be aware that they are being tracked (Powell et al., 2022).

These risks highlight a key message: AI does not cause abuse, but it can be weaponised by those with harmful intentions. For youth workers, social workers, and educators, understanding how these technologies function, and how they intersect with pre-existing patterns of offline violence, is essential for protecting and empowering young people. Table 2 summarises these AI-facilitated abuse methods.

| Abuse Method | Description | Real-World Example | Reference |
|---|---|---|---|
| Deepfakes | AI-generated images or videos that swap someone’s face into sexual content | A teen finds a pornographic video circulating in which her face is superimposed using deepfake tech | Umbach et al. (2024) |
| Harassment bots | Automated accounts that spam threatening or degrading content, often targeting activists or marginalised groups | A young female climate activist receives hundreds of AI-generated slurs across platforms after a public talk | Powell et al. (2022) |
| Algorithmic amplification of hate | Platform algorithms prioritise hateful or inflammatory posts for engagement, exposing targets to mass abuse | LGBTIQA+ influencers report surges of hate after posting about Pride, due to recommendation algorithms | Henry & Powell (2018) |
| Data exploitation | AI systems leak private data via “smart” devices or infer sensitive information without consent | A smart speaker in a teen’s home records private arguments and transmits the logs to a cloud server without consent | Powell et al. (2022) |

While AI technologies can be misused to cause harm, they also hold significant potential to support prevention, early detection, and survivor support in cases of gender-based violence (GBV). When developed and applied ethically, AI can enhance safety and improve access to support services for individuals affected by online abuse.

One of the most effective uses of AI is in detecting and removing harmful content. Image-matching tools, such as those modelled after Microsoft’s PhotoDNA, are already helping platforms identify and block known child sexual abuse material (CSAM). These systems compare a digital fingerprint (hash) of each uploaded image against secure databases of previously reported abuse content. Although these tools cannot prevent new images from being created, they are an important safeguard against the spread of harmful material (UNICEF, 2022).
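The matching step can be sketched in a few lines. This is a simplified illustration, not how PhotoDNA itself works: PhotoDNA uses a robust hash designed to survive resizing and small edits, whereas the sketch below uses an exact-match SHA-256 hash from Python's standard library. The function names and the sample database are hypothetical.

```python
import hashlib


def fingerprint(image_bytes: bytes) -> str:
    # SHA-256 is an exact-match hash: any change to the file changes the hash.
    return hashlib.sha256(image_bytes).hexdigest()


# Hypothetical database of fingerprints of previously reported content.
known_hashes = {fingerprint(b"previously-reported-image-bytes")}


def should_block(upload: bytes) -> bool:
    # Block the upload only if its fingerprint is already in the database.
    return fingerprint(upload) in known_hashes


print(should_block(b"previously-reported-image-bytes"))  # True
print(should_block(b"a-brand-new-image"))                # False
```

The second call shows the limitation described above: content that has never been reported has no fingerprint in the database, so it passes through unmatched.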

AI is also being used to power chatbots and virtual assistants that help survivors find the support they need. These tools are especially helpful for young people who may feel unsure about talking to someone in person. Chatbots can guide users through questions about their experiences and connect them with appropriate mental health, legal, or emergency services. When designed with trauma-informed principles and available in multiple languages, they can provide a safe first step toward help (Musharu et al., 2025).

Natural Language Processing (NLP), a form of AI that helps computers understand human language, is being used to detect and block hate speech. Platforms like X (formerly Twitter), Instagram, and TikTok are increasingly using NLP models to scan posts for abusive or threatening language and take action. Although these tools are not perfect, they help reduce the volume of visible harm and can protect users from the psychological effects of targeted harassment (Powell et al., 2022).

There are also early trials of AI used in prevention and early warning systems. Some apps analyse user behaviour, such as the tone of messages or frequency of contact, to detect patterns that may indicate risk. In some domestic violence settings, AI tools are being explored to help identify high-risk situations before physical harm occurs. These predictive models must be used with care to avoid false positives or breaches of privacy, but they show promise in improving early intervention (Henry & Powell, 2018).
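As a rough illustration of the frequency part of this idea, the sketch below flags a sharp rise in daily contact from one sender. It is a toy under stated assumptions: the function name, the three-day window, and the threefold threshold are all hypothetical, it ignores message tone entirely, and it is not drawn from any of the trials mentioned above.

```python
from statistics import mean


def contact_is_escalating(daily_counts: list[int], window: int = 3, ratio: float = 3.0) -> bool:
    """Return True if the recent daily average is at least `ratio` times the earlier average."""
    if len(daily_counts) <= window:
        return False  # not enough history to compare
    # Use a floor of 1 message per day so a near-silent baseline does not trigger on trivial changes.
    baseline = max(mean(daily_counts[:-window]), 1.0)
    recent = mean(daily_counts[-window:])
    return recent >= ratio * baseline


# Messages received per day from one sender, oldest first (invented numbers).
steady = [4, 5, 3, 4, 5, 4, 4]
spiking = [4, 5, 3, 4, 20, 35, 50]

print(contact_is_escalating(steady))   # False
print(contact_is_escalating(spiking))  # True
```

A rule this simple would also flag harmless situations, such as friends planning an event, which is why the false-positive and privacy concerns above matter before any such signal is acted on.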

What makes these tools useful is not only the technology behind them, but how they are introduced, explained, and governed. Tools that are co-designed with youth, survivors, and community organisations are more likely to reflect real-world needs and be trusted by users (see Table 3).

| AI Application | Description | Example | Reference |
|---|---|---|---|
| Image detection | AI tools detect known or suspected abusive content (e.g., non-consensual nudes) and flag/remove it from platforms | Meta uses PhotoDNA-style tools to block uploads of known child sexual abuse materials (CSAM) | UNICEF (2022) |
| Triage chatbots | AI-driven bots guide survivors to the right services, 24/7, with trauma-informed flows | A youth accesses a support chatbot on WhatsApp to find mental health support in their language | Musharu et al. (2025) |
| NLP for hate detection | Natural language processing (NLP) is used to flag misogynistic or transphobic posts automatically | Twitter deploys content classifiers trained on hate speech datasets | Powell et al. (2022) |
| Predictive policing or early warning | In some trials, AI is used to analyse risk patterns in abusive behaviour to trigger alerts or prevention | Prototype apps assess escalation patterns in DV cases using SMS tone + frequency | Henry & Powell (2018) |

As AI is increasingly used in the fight against gender-based violence (GBV), it is essential to understand not only its capabilities, but also its limitations and risks. AI tools are not neutral. They are shaped by the data they are trained on, the goals of their developers, and the social environments in which they are deployed. Without proper oversight, these tools may unintentionally reinforce inequality, expose survivors to new forms of harm, or fail to protect those they are designed to help.

One major concern is bias in AI models. Many systems used for detecting hate speech, abusive messages, or harmful images are trained on data that underrepresents certain groups or languages. As a result, abuse targeting women of colour, LGBTIQA+ individuals, or non-Western users may go undetected. In some cases, legitimate expressions of anger or frustration by survivors have been mistakenly flagged as abusive, while actual threats remain online (Powell et al., 2022).
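One way to make this kind of bias visible is to compare how often a detection tool misses abuse aimed at different groups. The sketch below does this on a small audit sample. The data, the group labels, and the function name are invented for illustration and do not come from any study cited here.

```python
from collections import defaultdict

# Hypothetical audit sample. Every post here is known to be abusive:
# (type of post, did the tool flag it?)
audit = [
    ("English-language posts", True),
    ("English-language posts", True),
    ("English-language posts", True),
    ("English-language posts", False),
    ("Posts using regional slang", True),
    ("Posts using regional slang", False),
    ("Posts using regional slang", False),
    ("Posts using regional slang", False),
]


def miss_rate_by_group(samples):
    """Share of abusive posts in each group that the tool failed to flag."""
    totals = defaultdict(int)
    missed = defaultdict(int)
    for group, flagged in samples:
        totals[group] += 1
        if not flagged:
            missed[group] += 1
    return {group: missed[group] / totals[group] for group in totals}


rates = miss_rate_by_group(audit)
print(rates["English-language posts"])      # 0.25
print(rates["Posts using regional slang"])  # 0.75
```

An overall miss rate of 50% would hide the fact that, in this invented sample, one group is three times more likely to be left unprotected than the other.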

Another risk is the lack of transparency in how AI tools make decisions. Survivors may not know why a post was taken down or why a chatbot gave a certain response. This “black box” problem reduces trust in AI systems and limits accountability. For young people, who are often less familiar with their digital rights, it can lead to confusion or frustration when they try to seek help online (Henry & Powell, 2018).

Surveillance misuse is also a growing issue. AI tools designed to protect users, such as location trackers or emotion recognition software, can be weaponised by abusers. If safety apps do not have strict privacy protections, they can be used to monitor or control victims instead of helping them. This is especially dangerous in domestic violence situations where technology is already part of the pattern of coercive control (UNESCO, 2023).

The lack of youth participation in designing these tools is another critical gap. Young people often have unique experiences of online abuse, but they are rarely consulted in the development of digital safety solutions. This can result in tools that are poorly adapted to their needs, use confusing language, or miss important context such as slang, cultural norms, or platform-specific risks (Musharu et al., 2025).

Lastly, there is a lack of longitudinal data about what actually works. Many AI-based interventions are pilot projects or commercial products with limited transparency. Without independent evaluation and publicly available data, it is difficult to assess whether these tools reduce harm over time or merely shift the problem to other platforms or populations.

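When assessing an AI tool for use with young people, youth workers can ask the following questions:

- Does the tool respect privacy? Are data stored securely, and can youth use it without sharing sensitive information?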

- Is the language accessible to all youth? Does the tool avoid jargon and account for differences in age, background, and digital literacy?

- Are there risks of reinforcing stereotypes? Does the system recognise diverse identities and avoid gender, racial, or cultural bias?

- Is it transparent about how it works? Can users understand how decisions are made and what happens to their data?

- Was it designed with youth input? Have young people tested or helped shape this tool to make it relevant and inclusive?

| Ethical Challenge | Description | Implication |
|---|---|---|
| Lack of transparency | Many AI tools operate as “black boxes,” and it is unclear how decisions are made | Survivors may not trust automated tools or understand how their data is used |
| Bias and misclassification | AI may reflect societal bias, failing to detect abuse toward marginalised users | NLP systems may miss hate against non-Western gender identities |
| Surveillance risks | Tools meant for protection may be exploited for stalking or abuse | Location-tracking or emotion-detection features may be turned against victims |
| Lack of youth voice in design | Few AI tools are co-designed with young people, leading to mismatches in usability or safety | Chatbots may overlook local slang or emotional nuances that matter to youth |

🛡️ Safe Online Practices

This unit introduces practical strategies for navigating and supporting safer online experiences in the face of gender-based violence (GBV). It explores how youth workers can help reduce the risk of harm by strengthening digital safety habits, responding effectively to incidents of online abuse, and encouraging respectful, empowering communication. The unit also looks at how positive peer norms and bystander actions can shift online cultures toward greater safety and inclusion. Finally, it provides guidance on using platform-specific tools to report abuse, safeguard privacy, and support the mental well-being of young people in digital spaces.

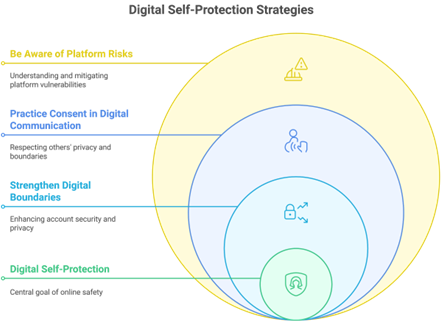

In an increasingly digital world, safeguarding personal information and maintaining privacy are paramount. This section outlines essential strategies for individuals to enhance their digital self-protection. By implementing these core strategies, users can create stronger digital boundaries, practise consent in their communications, and remain aware of the risks associated with various platforms.

1. Strengthen Digital Boundaries

- Use strong, unique passwords: Ensure that all major accounts are secured with complex passwords that are not easily guessable. Consider using a password manager to keep track of them.

- Activate two-factor authentication: This adds an extra layer of security by requiring a second form of verification, such as a text message or authentication app, in addition to your password.

- Avoid sharing live locations: Refrain from posting real-time locations or sensitive personal data in public forums, as this can expose you to unwanted attention or risks.

- Conduct regular privacy check-ups: Regularly review your privacy settings on social media platforms, focusing on location sharing, tagging permissions, and follower lists to ensure that your information is only accessible to trusted individuals.
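For readers curious about what a "strong, unique" password looks like in practice, here is a minimal sketch of the kind of random generation a password manager performs. It uses Python's standard `secrets` module, which is intended for security-sensitive randomness. The `generate_password` helper and the default length of 16 are illustrative assumptions, not a recommendation from this module's sources.

```python
import secrets
import string


def generate_password(length: int = 16) -> str:
    # Draw each character independently from letters, digits, and punctuation.
    alphabet = string.ascii_letters + string.digits + string.punctuation
    return "".join(secrets.choice(alphabet) for _ in range(length))


# Each call produces a different password, so every account can have its own.
print(generate_password())
print(generate_password())
```

In everyday use, a password manager handles both the generation and the storage, so nobody needs to memorise or reuse passwords like these.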

2. Practice Consent in Digital Communication

- Seek permission before sharing: Always ask for consent before sharing images or videos that include other people. This respects their privacy and autonomy.

- Respect boundaries in messaging: Before forwarding sensitive content in group chats or messages, check in with the original sender to ensure they are comfortable with it being shared.

- Model healthy online relationships: Promote positive interactions by avoiding manipulative language or pressure in your communications, fostering a respectful digital environment.

3. Be Aware of Platform Risks

- Know the risks of different apps: Familiarise yourself with which applications have weak moderation policies or allow for anonymous abuse, as these can pose significant risks to users.

- Learn how to manage content: Understand how to block, mute, or report harmful content on major platforms to protect your online experience.

- Utilise safety features: Take advantage of safety features offered by platforms, such as keyword filters, content warnings, and follower limits (e.g., using Instagram’s “Close Friends” feature) to curate a safer online space.

By adopting these strategies, individuals can significantly enhance their digital self-protection and foster a safer online environment for themselves and others.


References

- DeRiggi, M., Henry, N., & Powell, A. (2023). Digital bystanders: What works to support victims of technology-facilitated GBV. Youth & Society. https://doi.org/10.1177/0044118X231163511

- Henry, N., & Powell, A. (2018). Technology-facilitated sexual violence: A literature review of empirical research. Trauma, Violence, & Abuse, 19(2), 195–208. https://doi.org/10.1177/1524838016650189

- Lippman, J. R., & Campbell, S. W. (2014). Damned if you do, damned if you don’t… if you’re a girl: Relational and normative contexts of adolescent sexting in the United States. Journal of Children and Media, 8(4), 371–386. https://doi.org/10.1080/17482798.2014.923009

- Musharu, T., Gomez, J. M., Rosales, M., & Navarro Soria, I. (2025, June). AI and open data for GBV prevention among youth: Ethical governance and policy frameworks in the EU. In Proceedings of the 14th BUIS-Tage Conference on Smart and Sustainable Infrastructures. Oldenburg, Germany.

- Powell, A., Henry, N., Flynn, A., & Sugiura, L. (2022). Online, always: A scoping review of technology-facilitated abuse and gender-based violence in the Global North. Computers in Human Behavior, 129, 107131. https://doi.org/10.1016/j.chb.2021.107131

- Rogers, M. M., Burke, J. G., & Earp, J. A. L. (2022). Technology-facilitated abuse in intimate relationships: A scoping review. Trauma, Violence, & Abuse, 24(4), 2210–2226. https://doi.org/10.1177/15248380221093133

- Stonard, K. E., Bowen, E., Lawrence, T. R., & Price, S. A. (2014). The relevance of technology to the nature, prevalence and impact of adolescent dating violence and abuse: A research synthesis. Aggression and Violent Behavior, 19(4), 390–417. https://doi.org/10.1016/j.avb.2014.06.005

- Umbach, R., Valdez, A., & Dobberstein, D. (2024). Non-consensual deepfake pornography: Evidence from ten countries. Proceedings of CHI ’24. https://doi.org/10.1145/3491102.3517575

- UNESCO. (2023). Exposing technology-facilitated gender-based violence in an era of generative AI. https://unesdoc.unesco.org/ark:/48223/pf0000386461

- UNICEF. (2022). Safer Chatbots: Implementation Guide. https://www.unicef.org/media/114681/file/Safer-Chatbots-Implementation-Guide-2022.pdf

- Wolak, J., Finkelhor, D., Mitchell, K. J., & Ybarra, M. L. (2008). Online “predators” and their victims: Myths, realities, and implications for prevention and treatment. American Psychologist, 63(2), 111–128. https://doi.org/10.1037/0003-066X.63.2.111